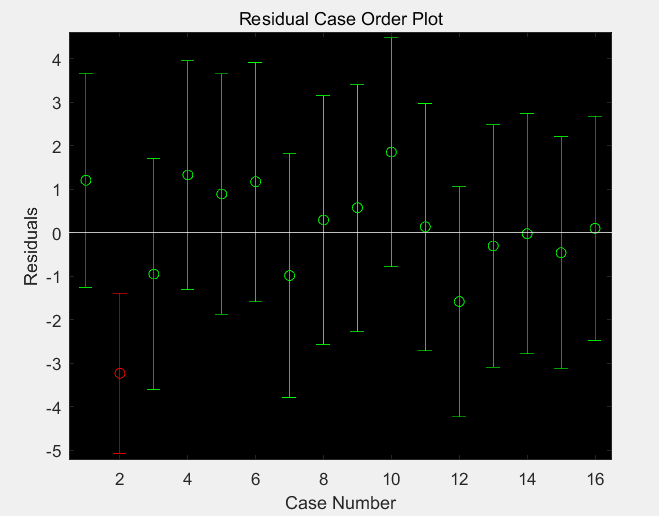

When all points fall on a downward slope, r = -1. When all points fall on a trend line with an upward slope, r = +1. The closer the correlation coefficient is to +1 or -1, the better the two variables "keep in step." This can be visualized by the degree to which the scatter cloud adheres to an imaginary trend line through the data. Pearson's correlation coefficient ( r ) is a statistic that quantifies the relationship between X and Y in unit-free terms. For insights into how to address outliers, please see Correlation Pearson's correlation coefficient ( r ) However, they should never be entirely ignored. In some instances, outliers should be excluded before analyzing the data and in other instances they should remain present during analysis. Identifying and dealing with outliers is an important statistical undertaking. Observations that do not fit the general data pattern are called outliers.

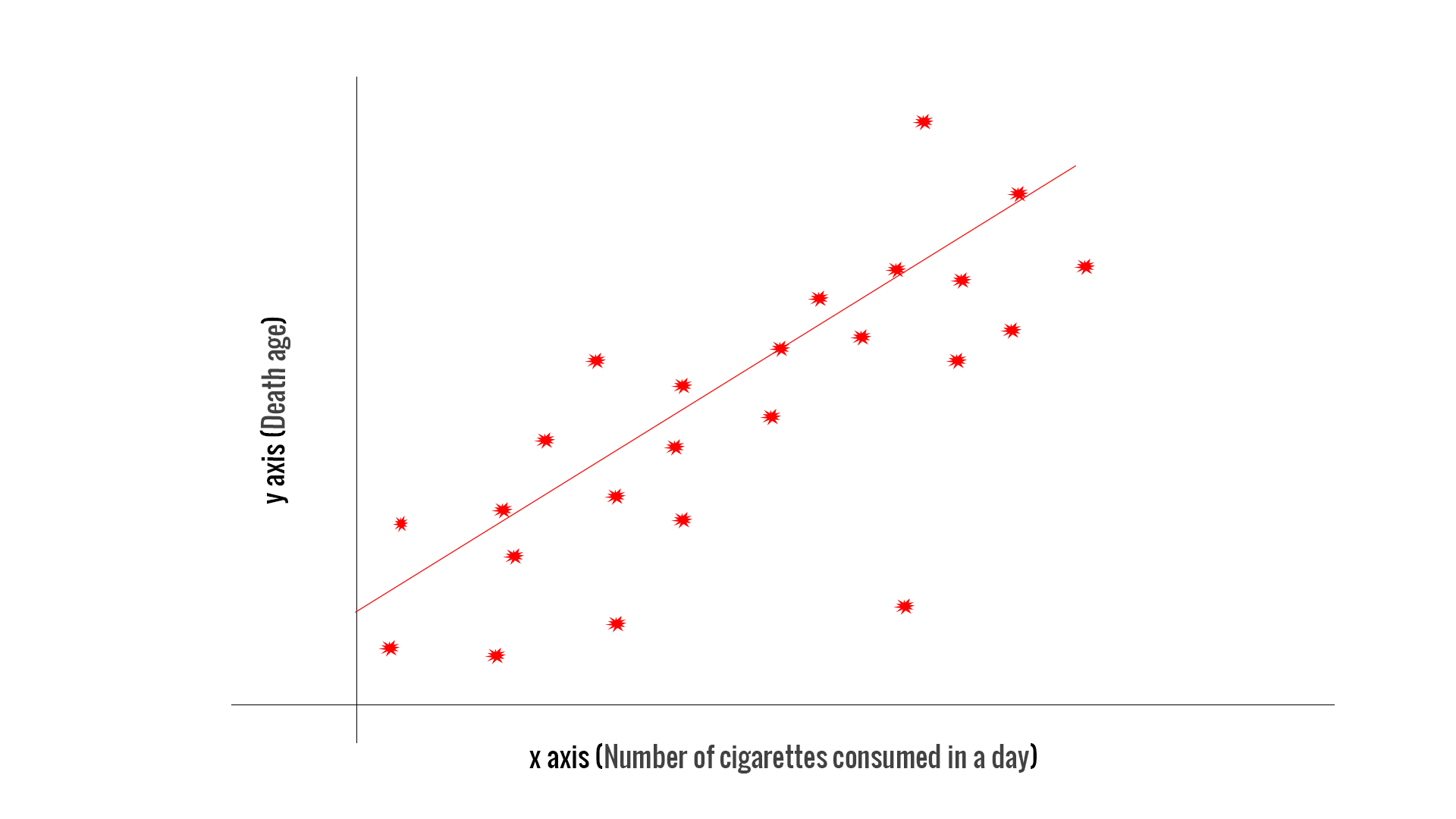

(Suggestion: Enter the illustrative data set into an SPSS file and produce this scatter plot.) Outliers SPSS: To draw a scatter plot with SPSS, click on Graphs | Simple | Scatter, and then select the variables you wish to plot. Negative correlation (high values of X associated with low values of Y),.Positive correlation (high values of X associated with high values of Y),.Thereby, a negative correlation is said to exist. That is, as the number of children receiving reduced-fee meals at school increases, the bicycle helmet use rate decreases. Notice that this graph reveals that high X values are associated with low values of Y. The scatter plot of the illustrative data set is shown below: This type of graph shows ( x i, y i ) values for each observation on a grid. The basis of both correlation and regression lies in bivariate ("two variable") scatter plots. X represents the percentage of children receiving free or reduced-fee meals at school. Y represents as the percentage of bicycle riders in the neighborhood wearing helmets. Data come from a study of bicycle helmet use ( Y ) and socioeconomic status (X). To illustrate both methods, let us use the data set called BICYCLE.SAV. In general, the dependent (outcome) is referred to as Y and the independent (predictor) variable is called X. This is used to analyze the relationship between two continuous variables. We will just address the tip of the iceberg for this topic, by basic linear correlation and regression techniques. In contrast, in the example where $X$ and $Z$ are $\pm1,$ learning $X = 1$ gives you no probabilistic information about $Y.$ It's still $\pm 1$ with 50-50 probability, just like before you knew $X.11: Correlation and Regression 11: Linear Correlation & RegressionĬorrelation and regression are complex and powerful statistical techniques that have wide application in data analysis. 
In the example with normal random variables, knowing $X=1$ tells you that $Y = \pm1,$ which is new information about $Y$ since it could have been any real number. The key is that dependence means knowing one variable tells you something about the the other. However, if you replace $X$ and $Z$ in my example with standard normal variables and set $Y = XZ,$ then $X$ and $Y$ are not independent (although they are uncorrelated). For instance, let $X$ and $Z$ be independent RVs, having value $1$ with probability $1/2$ and $-1$ with probability $1/2.$ Then let $Y = XZ.$ $Y$ depends on $X$ in a functional sense, but it is actually independent of $X$.

However, remember this rule of thumb is not always right. In this case, that rule of thumb is correct and others have given the computations and counterexamples demonstrating that. Your intuition is right that when one variable is a function of the other, they are usually not independent, (although they may be uncorrelated).
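The two $Y = XZ$ examples above can be checked numerically. The following is a minimal simulation sketch, not part of the original text: the helper name `pearson_r`, the sample size, and the random seed are all assumptions chosen for illustration. It computes Pearson's r as the sum of cross-products of deviations divided by the square root of the product of the sums of squared deviations, then applies it to both examples. In the $\pm1$ case it also estimates $P(Y = 1 \mid X = 1)$. In the normal case it also correlates $|X|$ with $|Y|$, which is one simple way to expose a dependence that r between $X$ and $Y$ misses.

```python
import numpy as np


def pearson_r(x, y):
    """Pearson's r: cross-products of deviations over the root of the
    product of the sums of squared deviations (hypothetical helper)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    dx = x - x.mean()
    dy = y - y.mean()
    return float((dx * dy).sum() / np.sqrt((dx ** 2).sum() * (dy ** 2).sum()))


rng = np.random.default_rng(0)  # seed is an arbitrary assumption
n = 200_000                     # sample size is an arbitrary assumption

# Example 1: X and Z are independent, each +1 or -1 with probability 1/2.
x_sign = rng.choice([-1.0, 1.0], size=n)
z_sign = rng.choice([-1.0, 1.0], size=n)
y_sign = x_sign * z_sign

r_sign = pearson_r(x_sign, y_sign)
# Estimated P(Y = 1 | X = 1); independence predicts a value near 1/2.
p_y1_given_x1 = float((y_sign[x_sign == 1.0] == 1.0).mean())

# Example 2: X and Z are independent standard normals, Y = XZ.
x_norm = rng.standard_normal(n)
z_norm = rng.standard_normal(n)
y_norm = x_norm * z_norm

r_norm = pearson_r(x_norm, y_norm)
# Correlating magnitudes reveals the dependence hidden from r_norm.
r_abs = pearson_r(np.abs(x_norm), np.abs(y_norm))

print(f"signs:   r = {r_sign:.3f}, P(Y=1 | X=1) = {p_y1_given_x1:.3f}")
print(f"normals: r = {r_norm:.3f}, r(|X|, |Y|) = {r_abs:.3f}")
```

In both cases r between $X$ and $Y$ should come out close to zero. Only in the normal case should the magnitudes be clearly correlated, which matches the claim that the variables are uncorrelated in both examples but dependent only in the second.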
